Remember when Elon Musk made Bitcoin crash by tweeting that Tesla would stop accepting it because of environmental concerns, and everyone was worried about the environmental impact of proof-of-work mining? That was in 2021, and degens haven’t forgotten.

But today, Musk’s xAI is building what may be the world’s largest AI supercluster, and governments are rushing to pass laws to boost AI innovation, while hardly anyone is questioning the energy consumption.

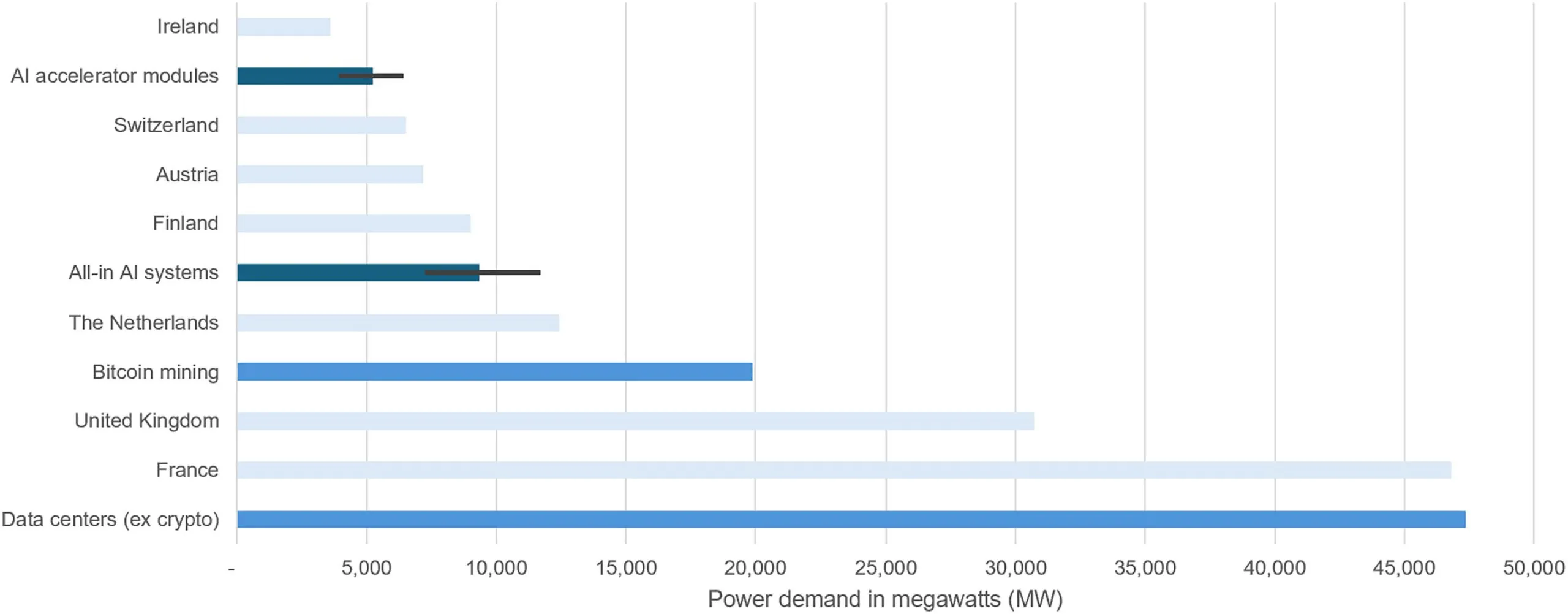

A new peer-reviewed research paper published in the scientific journal Joule found that artificial intelligence could account for up to 49% of global data center electricity usage by the end of 2025, surpassing even Bitcoin’s notorious energy appetite.

Alex de Vries-Gao, a PhD candidate at Vrije Universiteit Amsterdam and longtime critic of Bitcoin's energy consumption, found AI’s power demand could hit 23 gigawatts by January 1, equivalent to about 201 terawatt-hours annually. Bitcoin currently consumes around 176 TWh per year.

Image: Joule
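The link between the 23 GW and 201 TWh figures is a unit conversion: a constant power draw multiplied by the hours in a year. A minimal sketch, assuming a continuous load over a 365-day year (the function names are illustrative and do not come from the paper):

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; assumes a non-leap year


def gw_to_twh_per_year(power_gw: float) -> float:
    """Annual energy (TWh) for a constant power draw given in GW."""
    # GW * hours = GWh; divide by 1,000 to get TWh.
    return power_gw * HOURS_PER_YEAR / 1000.0


def twh_per_year_to_gw(energy_twh: float) -> float:
    """Average power draw (GW) implied by an annual energy total in TWh."""
    return energy_twh * 1000.0 / HOURS_PER_YEAR


if __name__ == "__main__":
    # AI projection cited in the article: 23 GW
    print(f"AI: {gw_to_twh_per_year(23):.1f} TWh/year")
    # Bitcoin figure cited in the article: 176 TWh/year
    print(f"Bitcoin: {twh_per_year_to_gw(176):.1f} GW average")
```

Under that assumption, 23 GW comes to roughly 201 TWh per year, and Bitcoin's 176 TWh per year corresponds to an average draw of about 20 GW.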

“Big tech companies are well aware of this trend, as companies such as Google even mention having faced a ‘power capacity crisis’ in their efforts to expand data center capacity,” de Vries-Gao wrote on LinkedIn. “At the same time, these companies prefer not to talk about the numbers involved.”

“Since ChatGPT kicked off the AI hype, we’ve never seen anything like this again,” he added. “As a result, it remains virtually impossible to get a good insight into the actual energy consumption of AI.”

Unlike Bitcoin’s transparent energy consumption, which anyone can calculate from the network hash rate, AI’s power hunger is deliberately opaque. Companies such as Microsoft and Google reported rising electricity consumption and carbon emissions in their 2024 environmental reports, citing AI as the main driver of this growth. However, these companies only provide metrics for their data centers in total, without specifically breaking out AI consumption.

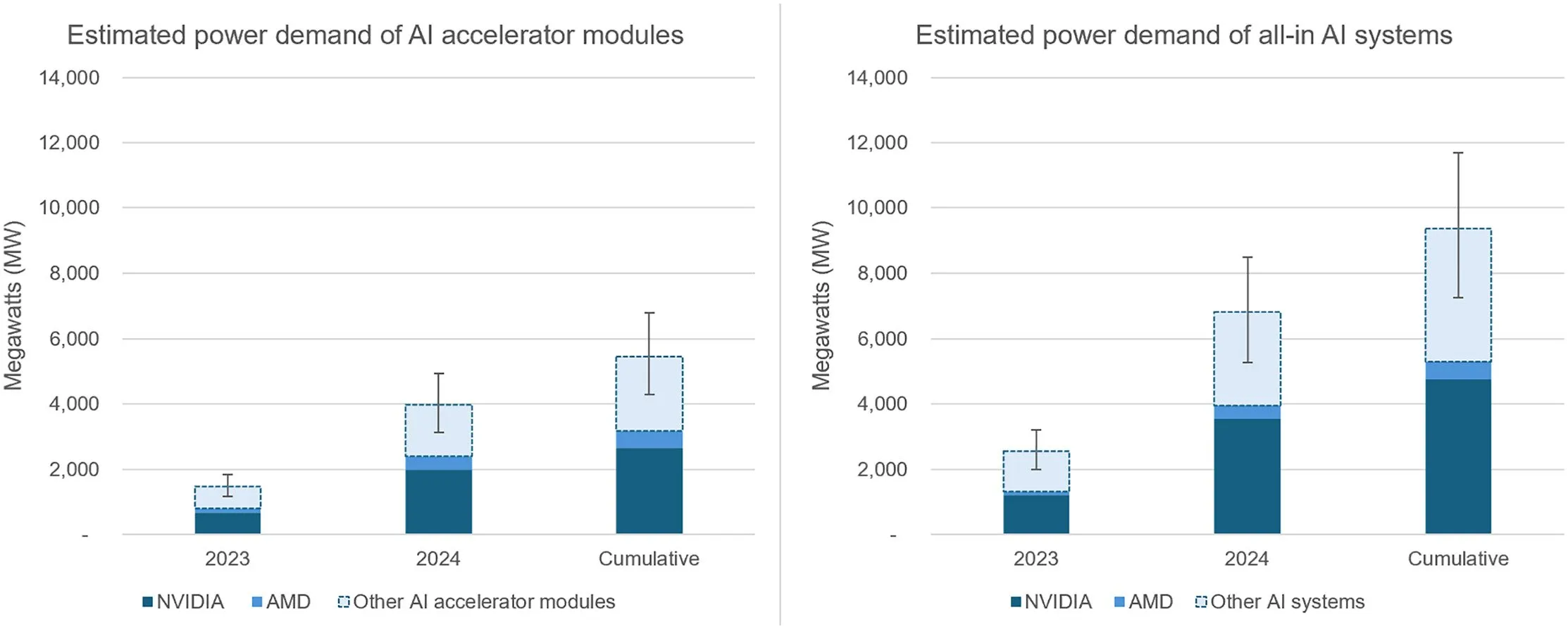

For the reason that tech giants refused to reveal AI-specific power knowledge, de Vries-Gao adopted the chips. He tracked Taiwan Semiconductor Manufacturing Firm’s chip packaging capability, since just about each superior AI chip requires its know-how.

The math, de Vries-Gao explained, works like printing business cards. If you know how many cards fit on a sheet and how many sheets the printer can handle, then you can calculate total production. De Vries-Gao applied this logic to semiconductors, analyzing earnings calls where TSMC executives admitted to “very tight capacity” and being unable to “fulfill 100% of what customers needed.”
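The business card logic can be sketched as a simple multiplication chain: sheets times cards per sheet gives total units, and units times power per unit gives total demand. The snippet below is only an illustration of that reasoning. The function name and every input value are hypothetical placeholders, not figures from the Joule paper or from TSMC.

```python
def estimate_power_gw(
    wafers: int,
    chips_per_wafer: int,
    watts_per_chip: float,
    yield_rate: float = 1.0,
) -> float:
    """Rough power demand (GW) of all chips a packaging line could produce.

    wafers          -- "sheets the printer can handle" (packaging capacity)
    chips_per_wafer -- "cards that fit on a sheet"
    watts_per_chip  -- assumed power draw of each finished chip
    yield_rate      -- fraction of chips that come out usable (0 to 1)
    """
    usable_chips = wafers * chips_per_wafer * yield_rate
    return usable_chips * watts_per_chip / 1e9  # watts -> gigawatts


if __name__ == "__main__":
    # HYPOTHETICAL inputs chosen only to show the arithmetic.
    power = estimate_power_gw(
        wafers=100_000,
        chips_per_wafer=30,
        watts_per_chip=1_000.0,
        yield_rate=0.9,
    )
    print(f"Estimated demand: {power:.2f} GW")
```

A real estimate would also need to account for the servers, cooling, and other overhead around each chip, which this sketch leaves out.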

His findings: Nvidia alone used an estimated 44% and 48% of TSMC’s CoWoS capacity in 2023 and 2024, respectively. With AMD taking another slice, these two companies could produce enough AI chips to consume 3.8 GW of power before even considering other manufacturers.

Image: Joule

De Vries-Gao’s projection showed AI hitting 23 GW by the end of 2025, assuming no additional production growth. TSMC has already confirmed plans to double its CoWoS capacity again in 2025.

Power demand is unlikely to slow down. Nvidia and AMD announced record revenue, while OpenAI announced Stargate, a $500 billion data center venture. Indeed, AI is the most profitable business in the tech industry, with any of the top three tech companies in the world surpassing the total market capitalization of the entire $3.4 trillion crypto ecosystem.

So the environment will probably have to wait.